Qwen2.5-Omni, the artificial superintelligence made in China

In the fast-moving world of artificial intelligence (AI), China has emerged as a leading player, and its latest release, Qwen2.5-Omni, is a case in point. Developed by Alibaba, this multimodal model is designed to understand text, images, audio, and video, and to respond fluently in both text and speech.

What makes Qwen2.5-Omni special?

Unlike AI models that focus on a single modality, Qwen2.5-Omni can handle several forms of data at once. It can analyze an image, interpret a snippet of audio, or understand a video, and then generate a coherent response as text or as natural-sounding speech. This makes it a versatile tool for applications that require real-time interaction and a deep understanding of different types of content.

Real-time interaction

One of the standout features of Qwen2.5-Omni is its ability to hold real-time conversations. Thanks to its architecture, it processes input in chunks as it arrives and starts generating a response right away instead of waiting for the full input, which makes interactions with users more natural and fluid.
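
To make the idea of chunked processing concrete, here is a minimal Python sketch. It is not Qwen2.5-Omni's actual implementation: the function names `chunk_stream` and `respond_incrementally`, the chunk size, and the placeholder "response" are all assumptions made for illustration. It only shows the general pattern of emitting a partial result for each chunk of input rather than waiting for the whole stream.

```python
from typing import Iterable, Iterator, List


def chunk_stream(samples: Iterable[float], chunk_size: int) -> Iterator[List[float]]:
    """Yield fixed-size chunks from a stream, plus a final shorter chunk if any."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    buffer: List[float] = []
    for sample in samples:
        buffer.append(sample)
        if len(buffer) == chunk_size:
            yield buffer
            buffer = []
    if buffer:
        # Flush whatever is left once the stream ends.
        yield buffer


def respond_incrementally(samples: Iterable[float], chunk_size: int) -> Iterator[str]:
    """Emit one partial response per chunk instead of waiting for the full input.

    The "response" is a placeholder summary (chunk index, size, mean level)
    standing in for whatever a real model would generate. Hypothetical helper.
    """
    for index, chunk in enumerate(chunk_stream(samples, chunk_size)):
        mean_level = sum(chunk) / len(chunk)
        yield f"chunk {index}: {len(chunk)} samples, mean level {mean_level:.2f}"


if __name__ == "__main__":
    # Made-up audio samples, purely for demonstration.
    fake_audio = [0.0, 0.5, 1.0, 0.5, 0.0, -0.5, -1.0]
    for partial in respond_incrementally(fake_audio, chunk_size=3):
        print(partial)
```

Because both functions are generators, the first partial result is available as soon as the first chunk is complete, which is the property that matters for low-latency conversation.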

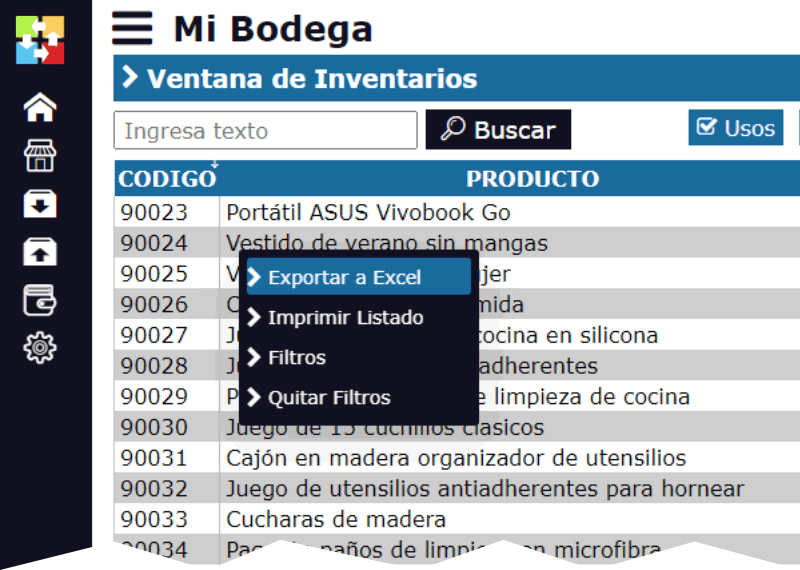

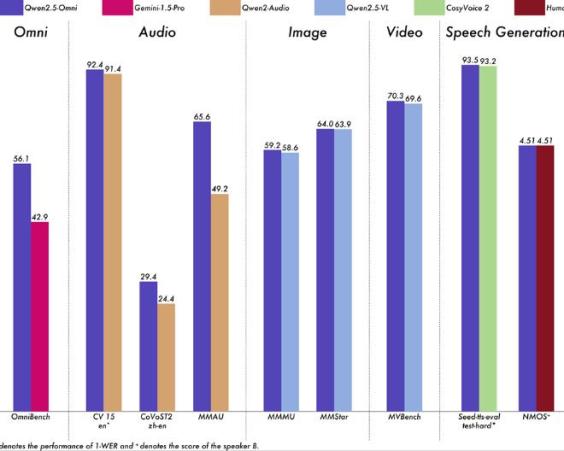

Outstanding performance

In comparative tests, Qwen2.5-Omni has outperformed models of similar size on a range of tasks. In evaluations of speech recognition and of image and video understanding, for example, it has scored higher than other open-source models.

Commitment to the community

Alibaba has made Qwen2.5-Omni available to the developer community as an open-source model. This allows researchers and companies worldwide to explore its capabilities, integrate it into their projects, and contribute to its continuous improvement. The openness of this model fosters collaboration and accelerates the advancement of AI globally.

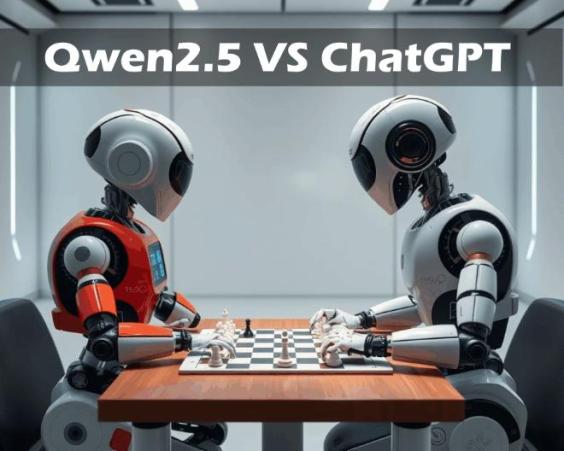

Qwen2.5-Omni VS ChatGPT

A comparison between Qwen2.5-Omni and ChatGPT is inevitable. ChatGPT, developed by OpenAI, is best known for generating coherent text and holding fluid conversations, while Qwen2.5-Omni, created by Alibaba, stands out for its multimodal approach. In addition to processing and generating text, Qwen2.5-Omni can interpret images, audio, and video, which lets it give more comprehensive and contextualized responses. Both models are powerful in their respective areas, but the versatility of Qwen2.5-Omni in handling multiple types of data can give it an advantage in applications that require a broader understanding of the environment.

Qwen2.5-Omni VS DeepSeek

The competition between Alibaba's Qwen models and DeepSeek has also caught the attention of the tech industry. DeepSeek, a Chinese startup, surprised the world with its DeepSeek-V3 model, known for its efficiency and low development cost. Alibaba responded by launching Qwen2.5-Max, a separate model in the Qwen family, and claimed that it outperforms DeepSeek-V3 in various benchmarks covering general knowledge, programming, and problem-solving. This rivalry reflects the dynamism of the AI sector in China and the speed at which companies there are competing to lead in innovation and technological capabilities.

Conclusion

Qwen2.5-Omni represents a milestone in the development of artificial intelligence and highlights China's capacity to innovate in this field. Its ability to understand and generate multiple forms of content, together with its availability as an open-source tool, positions it as a promising solution for a range of applications, from virtual assistants to educational systems. We may be witnessing the beginning of a new era in human-machine interaction, with Qwen2.5-Omni at the forefront.